One focus is looking at which neural systems to date have a chance to scale to larger sizes, which is one metric of a particular implementation's merit going forward. In addition, considerable time is spent discussing systems that can scale and how they will be able to scale to larger systems, in terms of IC process improvements, circuit approaches, and system-level constraints. One conclusion drawn is that with current research capabilities, together with additional research to transition these approaches to more typical IC and system building, reaching a system at the scale of the human brain is quite possible. Within our current grasp are circuits and technologies that can reach these large levels; even when researchers are building small prototypes, these issues must be considered to enable scaling to these larger levels. In the following sections, we will, in turn, discuss these aspects by focusing on key issues that affect this performance. Section 1 presents a framework for discussing large-scale neuromorphic systems. Section 2 discusses computational complexity and the necessary programmability and configurability, utilizing the right set of dense features to make an efficient implementation. Section 3 considers the power constraints in computation and communication required to operate such systems, and discusses power-constrained cortical structure design.

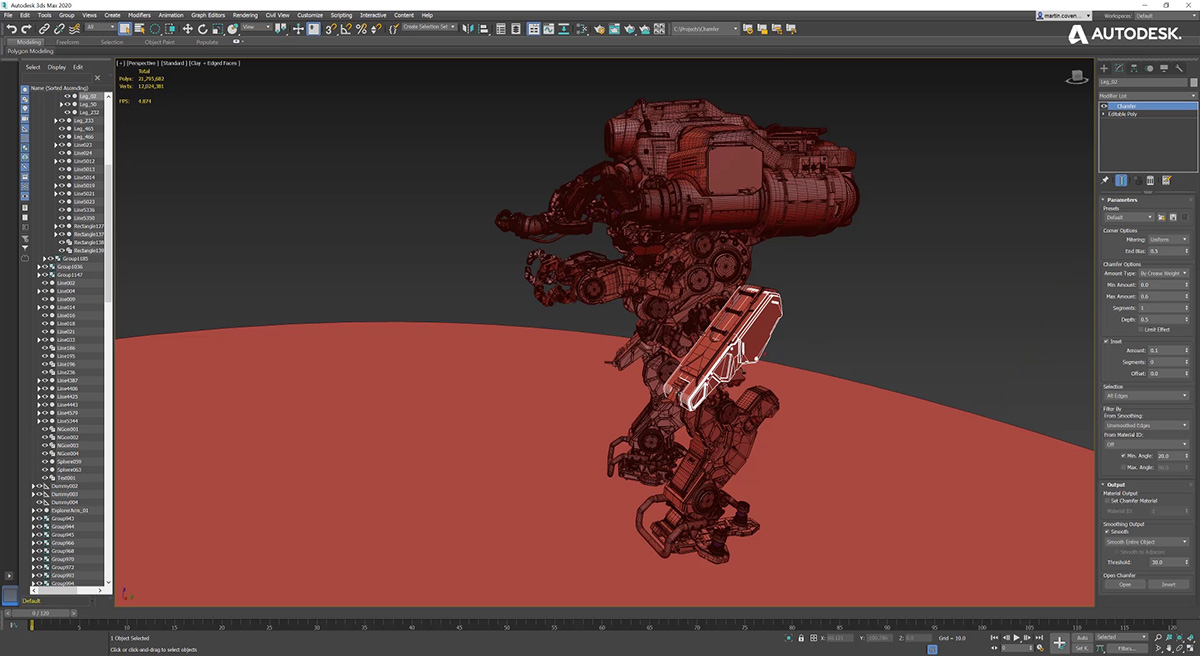

Figure 1 shows the estimated peak computational energy efficiency for digital systems, analog signal processing, and potential neuromorphic hardware-based algorithms; we discuss the details throughout this paper. The figure shows huge potential for neuromorphic systems: the community has a lot of room left for improvement, and there are potential directions for achieving it with technology already being developed. New technologies only improve the probability of this potential being reached. Computational power efficiency for biological systems is 8–9 orders of magnitude higher (better) than the power efficiency wall for digital computation; one topic this paper will explore is that analog techniques at a 10 nm node can potentially reach this same level of biological computational efficiency. This comparison requires keeping communication local and event rates low, two properties seen in cortical structures. To ignore a long-term neuromorphic approach, for example by depending solely on digital supercomputing techniques, is to ignore major contemporary issues such as system power, area, and cost. It also misses application opportunities, as well as the chance to use the similarities between silicon and neurobiology to drive further modeling advances.

Given that the community is making its first serious approaches toward large-scale neuromorphic hardware, a neuromorphic hardware roadmap could be seen as a way through the foreseen upcoming bottlenecks (Marr et al., 2012) in computing performance, further enabling research and applications in these areas. The particular technology choice is flexible, although most research progress is built upon analog and digital IC technologies.

Neuromorphic engineering builds artificial systems from basic nervous system operations, implemented by bridging the fundamental physics of the two media. This enables both superior performance in synthetic applications and better knowledge of the physics and computation of biological nervous systems.

What many people looking into the neuromorphic community from the outside, as well as some within the community, want to see is the long-term technical potential and capability of these approaches.

A primary goal since the early days of neuromorphic hardware research has been to build large-scale systems, although only recently have enough technological breakthroughs been made to allow such visions to be possible.